Abhijit Banerjee (L), Esther Duflo (M) and Michael Kremer (R). The Royal Swedish Academy of Sciences said this year's winners of the Nobel Prize in Economics have introduced a new approach to obtaining reliable answers about the best ways to fight global poverty. Photo: Agency

The 2019 Nobel Prize in Economic Sciences went to three development economists, Abhijit Banerjee, Esther Duflo and Michael Kremer, for their contribution to spreading the use of randomised controlled trials (RCTs) to understand poverty in developing countries. In light of this year's prize, there will now be renewed interest outside academia in understanding RCTs. In this article, let me try to explain what RCTs are and the issues we need to think about when considering RCTs for policies and programmes.

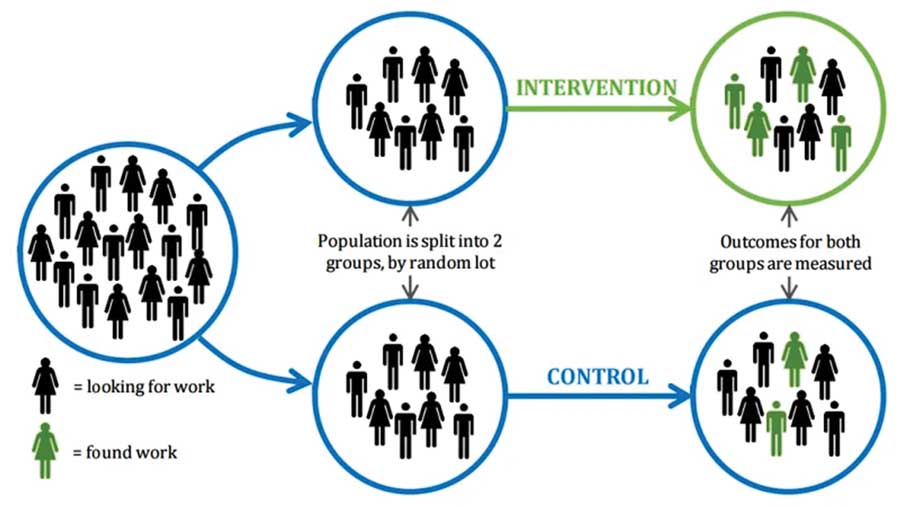

An RCT is a simple but powerful tool for understanding the effectiveness of an intervention. In an RCT, a group of people is randomly assigned to receive a specific intervention (the treatment group), and their outcomes are then compared with those of a group that did not receive it (the control group). Because assignment to the two groups is random, the groups are, on average, alike in every respect except the intervention, so differences in outcomes between them can be causally attributed to the intervention. Thus, a simple comparison of the average outcomes of the two groups provides a clean estimate of the intervention's effect.
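To illustrate why a simple difference in averages is enough, here is a minimal Python simulation. It is only a sketch under assumptions made up for illustration: people's outcomes vary around 50 points, the intervention adds a fixed 5 points for everyone, and each person is assigned to treatment or control by a fair coin flip. The function name `simulate_rct` and all the numbers are hypothetical, not drawn from any real trial.

```python
import random
import statistics

def simulate_rct(n=10_000, true_effect=5.0, seed=1):
    """Hypothetical RCT: outcomes vary by person; treatment adds true_effect."""
    rng = random.Random(seed)
    treated, control = [], []
    for _ in range(n):
        # Outcome this person would have without the intervention
        baseline = rng.gauss(50.0, 10.0)
        # A coin flip, not the person's characteristics, decides assignment
        if rng.random() < 0.5:
            treated.append(baseline + true_effect)
        else:
            control.append(baseline)
    # The RCT estimate is simply the difference in average outcomes
    return statistics.mean(treated) - statistics.mean(control)

estimate = simulate_rct()
print(f"Estimated effect: {estimate:.2f}")
```

With a sample this large, the printed estimate should land close to the assumed effect of 5, because the coin flip balances people's baseline outcomes across the two groups.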

Why do we need RCTs? Suppose we want to understand whether regular meetings between teachers and parents help improve students' academic outcomes. On the surface, the answer seems obvious: giving parents information about their children's academic progress should be beneficial. The problem, however, is that parents who are more engaged in their children's education are also more likely to come to school to meet teachers. If we use survey (observational) data, we might find a strong association between parents' presence at school and children's scholastic achievement. But such an association might not be causal; that is, we cannot really say whether students' higher test scores are due to the meetings between parents and teachers.

In the absence of an experiment, any difference in outcomes could be due to parental characteristics or to unobserved factors, such as parental motivation, that we cannot measure. Parents who attend the meetings are self-selected and may differ from those who do not, so researchers using non-experimental evaluation must rely on complicated statistical tools to address this selection bias. The main concern with the non-experimental approach is that the results can change depending on the statistical technique used for the evaluation.
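The selection problem can be sketched with another hypothetical simulation. Here parental motivation, which a survey would not record, raises test scores directly and also makes parents more likely to attend meetings, while the meetings themselves are assumed to add 2 points. The helper names and every parameter are illustrative assumptions, and for simplicity every parent assigned to a meeting is assumed to attend.

```python
import random
import statistics

TRUE_EFFECT = 2.0  # hypothetical effect of attending meetings on test scores

def student_score(rng, motivation, attends):
    # Motivated parents raise scores whether or not they attend meetings
    return 50 + 8 * motivation + (TRUE_EFFECT if attends else 0.0) + rng.gauss(0, 5)

def observational_estimate(n=20_000, seed=2):
    """Compare attendees with non-attendees when parents choose for themselves."""
    rng = random.Random(seed)
    attend, stay_home = [], []
    for _ in range(n):
        motivation = rng.gauss(0, 1)  # unobserved by the researcher
        # Self-selection: motivated parents are far more likely to attend
        attends = rng.random() < (0.8 if motivation > 0 else 0.2)
        (attend if attends else stay_home).append(student_score(rng, motivation, attends))
    return statistics.mean(attend) - statistics.mean(stay_home)

def experimental_estimate(n=20_000, seed=2):
    """Compare randomly assigned treatment and control groups."""
    rng = random.Random(seed)
    treated, control = [], []
    for _ in range(n):
        motivation = rng.gauss(0, 1)
        attends = rng.random() < 0.5  # random assignment ignores motivation
        (treated if attends else control).append(student_score(rng, motivation, attends))
    return statistics.mean(treated) - statistics.mean(control)

print(f"Observational: {observational_estimate():.2f}")
print(f"Experimental:  {experimental_estimate():.2f}")
```

Under these assumptions, comparing attendees with non-attendees overstates the effect by a wide margin, because it mixes the meetings' effect with the motivation gap between the two groups, whereas randomising who attends recovers a figure close to the assumed 2 points with no statistical adjustment.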

With an RCT, one could introduce parent-teacher meetings in randomly selected schools and then examine students' outcomes after a certain period. Because schools are selected randomly, the schools selected for the intervention (treatment schools) and those not selected (control schools) should, on average, have similar characteristics, including how motivated their parents are. Hence, RCTs provide an easy and intuitive way to measure impact: the simple difference in average outcomes between treatment and control schools is the effect of the intervention.

In Bangladesh, academic researchers have conducted a large number of RCTs. One recently evaluated programme that has received a lot of attention is BRAC's asset transfer programme, known as the ultra-poor programme. It has been replicated in a number of countries by BRAC and other NGOs, and this year's Nobel laureates were also among the researchers who examined the programme in a variety of settings and contexts.

I have also conducted a number of RCTs in collaboration with BRAC and another NGO, the Global Development Research Initiative (GDRI). These range from evaluating an early childhood intervention and remedial education, to providing free after-school tutoring for poor children in rural areas, disseminating among farmers new agricultural practices that have shown significant yield gains in Africa and India, and teaching women financial literacy for their day-to-day activities. The opportunity to collaborate with local researchers and NGO staff has provided invaluable experience that goes beyond RCTs as a tool, such as learning how interventions work in the field and why certain interventions did not work despite best efforts.

I have also learned a lot from talking to women about their financial behaviour and their interactions with household members; to farmers about their rice cultivation practices and how yields differ across locations and farming practices; and to children about what they think their critical needs are for improving their education. Despite having spent my entire childhood and adolescence in villages, I felt I learned something new from these people every time I visited them. The RCT movement has also led many researchers to spend time with workers, household members, teachers and students, who are often the subjects of interest, to understand which interventions might be suitable and in what contexts.

RCTs have been criticised on many grounds, and following this year's Nobel Prize there has been a lot of debate on social media. Randomised experiments are very common in medical trials and public health research. Nor are they new in social science: many other researchers used RCTs well before these Nobel laureates started using them in their research.

However, these laureates have popularised RCTs for evaluating development programmes over the last two decades. The 'new' RCT movement is led primarily by economists, most of whom previously relied on sophisticated econometric tools and observational data, generally collected by a third party. A large number of these economists now travel to developing countries and work with practitioners and NGOs on many policy-relevant issues. They spend time in the field trying to understand programmes that improve education and health outcomes, addressing questions such as what causes poverty to persist, what the cost-effective ways of reducing poverty are, and how best to address food security. As a result, there is now increasing dialogue among academic researchers, practitioners and policymakers in many countries.

The main criticism of RCTs concerns their relevance outside the experimental setting: whether the results hold in other contexts, and whether they remain valid when an intervention is scaled up. This is a genuine concern. However, the evaluation of programmes such as BRAC's ultra-poor programme, replicated in a number of countries with different implementing agencies, shows that we can overcome such shortcomings.

RCTs are also often criticised for failing to explain why. If a programme is beneficial and highly effective, what are the underlying reasons? RCT researchers have come a long way: they now gather additional survey data and/or bring novelty to experimental design, for example by varying the treatment itself or the population exposed to it. Large-scale data collection alongside cleverly designed RCTs now enables researchers to examine mechanisms. For example, gains in test scores from parent-teacher meetings observed in an RCT could come from changes in the behaviour of parents and teachers rather than directly from the information exchanged at the meetings. Teachers might put in extra effort because they meet parents, or parents might devote more effort and resources at home to show more responsibility once they have met teachers. Hence, formal meetings between teachers and parents might induce other behavioural changes that are not due to the information parents receive from teachers at the meetings.

Therefore, one needs to understand how much of the increase in students' test scores is directly due to information and how much is due to changes in parental monitoring, teachers' effort or pedagogy. Children, too, might change their study habits knowing that their parents will meet their teachers. Hence, detailed data collected from parents, teachers and students would help us understand how the programme changed parents' time allocation and the resources they spend on their children, students' classroom attendance, or teachers' pedagogy.

The rapid expansion of RCTs in academia over the last two decades has pushed researchers to dig into survey data and other experimental approaches to understand the underlying reasons for the success or failure of interventions. Academic journals increasingly demand that researchers explore all plausible mechanisms behind their results. The American Economic Association now hosts a trial registry for researchers conducting RCTs. Registration has long been standard practice in public health; economists came to it somewhat later.

The basic design of a randomised controlled trial, shown with a test of a new 'back to work' programme

We now need to prepare a pre-analysis plan that describes the interventions, the outcomes to be examined, any sub-group analysis to be performed (such as differences between males and females), and the sample size and experimental design. Disclosing this information before the intervention starts, or before the endline survey, provides greater accountability and transparency and reduces the scope for data mining. Pre-analysis plans and registries have also pushed researchers to think more carefully about the causes of effects, and the theory behind their results, before they see the final results.
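One element of a pre-analysis plan, the sample size, is usually justified with a power calculation. The sketch below uses the standard textbook formula for comparing two means under individual randomisation: participants per group = 2 × (z for the significance level + z for the desired power)² × σ² / δ², where δ is the smallest effect worth detecting and σ is the standard deviation of the outcome. The function name and the default values (5% significance, 80% power) are illustrative choices, not any registry's requirement; designs that randomise whole schools, as in the parent-teacher example, need larger samples to account for clustering.

```python
import math
from statistics import NormalDist

def sample_size_per_arm(min_effect, sd, alpha=0.05, power=0.80):
    """Participants needed in each arm to detect min_effect with a two-sided test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_power = NormalDist().inv_cdf(power)          # about 0.84 for 80% power
    n = 2 * (z_alpha + z_power) ** 2 * sd ** 2 / min_effect ** 2
    return math.ceil(n)  # round up: we cannot recruit a fraction of a person

# Detecting an effect of 0.2 standard deviations on test scores
print(sample_size_per_arm(min_effect=0.2, sd=1.0))
```

Under these assumptions the answer is 393 students per arm; halving the detectable effect to 0.1 standard deviations roughly quadruples the requirement, which is one reason it helps to commit to these numbers before the data come in.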

We must bear in mind that the results of an RCT evaluation do not automatically translate into government policy. For example, a trial may find that a new drug for diabetic patients has no effect on average. However, the drug might be very effective for a subset of the population. People who have diabetes often have other conditions as well, and the drug might interact with them in such a way that we observe no average effect, even though it works very well for some groups. Hence, we should not jump to the conclusion that doctors should not prescribe the drug. Policy responses could therefore differ from the headline findings of an RCT.
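The diabetes example can also be sketched as a simulated trial. The assumptions are invented for illustration: half of the patients have another condition, the drug raises a health score by 4 points for patients without that condition and lowers it by 4 points for those with it, and treatment is assigned by coin flip. The group labels, effect sizes and function names are hypothetical.

```python
import random
import statistics

# Hypothetical effects of the drug on a health score (higher is better)
EFFECT = {"no_other_condition": 4.0, "other_condition": -4.0}

def run_trial(n=20_000, seed=3):
    """Simulate a trial; return outcomes keyed by (group, treated)."""
    rng = random.Random(seed)
    outcomes = {(g, t): [] for g in EFFECT for t in (True, False)}
    for _ in range(n):
        group = "other_condition" if rng.random() < 0.5 else "no_other_condition"
        treated = rng.random() < 0.5  # coin-flip assignment
        score = rng.gauss(60, 10) + (EFFECT[group] if treated else 0.0)
        outcomes[(group, treated)].append(score)
    return outcomes

def average_effect(outcomes, groups):
    """Difference in mean outcomes between treated and control, pooled over groups."""
    treated = [y for g in groups for y in outcomes[(g, True)]]
    control = [y for g in groups for y in outcomes[(g, False)]]
    return statistics.mean(treated) - statistics.mean(control)

outcomes = run_trial()
print("overall:", round(average_effect(outcomes, list(EFFECT)), 2))
for g in EFFECT:
    print(g, round(average_effect(outcomes, [g]), 2))
```

Under these assumptions the overall estimate is close to zero even though the drug clearly helps one group, which is why sub-group analyses are best specified in the pre-analysis plan rather than searched for after the results are in.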

RCTs have many other limitations. For instance, they are not a suitable tool for macro-level issues: one cannot randomise interest rates or inflation to examine, say, what happens when a country's central bank raises interest rates. Also, evaluations are typically carried out at a small scale, with careful monitoring and management of fieldwork. Both researchers and practitioners tend to pay attention to every minor detail to make sure the intervention goes in the right direction, which is often not the case for a large-scale programme. What works at a small scale because of close monitoring and management might not work at a larger scale. Nevertheless, evidence from small-scale studies offers an important piece of information about the programme or policy under study.

While RCTs have other shortcomings, they are simple to understand, which makes their results easier to explain to policymakers. Non-experimental evaluations are likely to give less credible estimates than the experimental approach. The RCT movement, led by these Nobel laureates, has helped academics, at least, to understand the issues in development programmes better. We must also appreciate the contribution of NGOs, and in some cases governments, in helping us understand the efficacy of different interventions using experimental methods in the field. For example, the remedial education programme in India is led by the NGO Pratham, and the asset transfer programme by BRAC. These programmes, however, gained prominence thanks to academics working with these NGOs.

Therefore, a platform that fosters interaction among NGOs, researchers and policymakers should be seen as a positive step towards understanding development economics better. Despite all the criticism, RCTs provide an important tool for examining many issues, particularly the effectiveness of social policies and programmes.

One should never think or argue that the RCT is a magic bullet that solves every problem in understanding the causal effects of a programme. It is, however, an important and credible tool that can be used to understand different aspects of a programme: not merely whether it works, but why and how it works. At the same time, we should embrace all other techniques that rigorously examine development programmes. Development economists should celebrate this year's Nobel Prize, but the debate on RCTs should continue so that we learn from competing techniques. We should also reflect on important issues that require going beyond a tool like the RCT. Understanding the causes and consequences of poverty and underdevelopment requires a much broader understanding than simply using a technique to establish causal effects.

Dr Asad Islam is a Professor in the Department of Economics, Monash University, Australia.

asadul.islam@monash.edu

© 2026 - All Rights with The Financial Express